This is the main branch documentation


Develop Documentation

- 1: Architecture

- 1.1: Api Components

- 2: Getting Started

- 3: Cloud Provider

- 3.1: Amazon Web Services

- 3.2: Azure

- 3.3: Civo

- 3.4: Google Cloud Platform

- 3.5: Local

- 4: Reference

- 5: Contribution Guidelines

- 5.1: Contribution Guidelines for CLI

- 5.2: Contribution Guidelines for Core

- 5.3: Contribution Guidelines for Docs

- 6: Concepts

- 6.1: Cloud Controller

- 6.2: Core Manager

- 6.3: Distribution Controller

- 7: Ksctl Components

- 7.1: Ksctl Agent

- 7.2: Ksctl Application Controller

- 7.3: Ksctl State-Importer

- 8: Kubernetes Distributions

- 9: Storage

- 9.1: External Storage

- 9.2: Local Storage

1 - Architecture

Architecture diagrams

1.1 - Api Components

Core Design Components

Design

Overview architecture of ksctl

Managed Cluster creation & deletion

High Available Cluster creation & deletion

2 - Getting Started

Getting Started Documentation

Installation & Uninstallation Instructions

Ksctl CLI

Let's begin by installing the tools. There are various methods.

Single command method

Install

Steps to install the Ksctl CLI tool

curl -sfL https://get.ksctl.com | python3 -

Uninstall

Steps to uninstall the Ksctl CLI tool

bash <(curl -s https://raw.githubusercontent.com/ksctl/cli/main/scripts/uninstall.sh)

zsh <(curl -s https://raw.githubusercontent.com/ksctl/cli/main/scripts/uninstall.sh)

From Source Code

Caution!

Under-development binaries

Note

The binaries for testing the ksctl CLI are available in the ksctl/cli repo.

# Linux

make install_linux

# macOS on M1

make install_macos

# macOS on Intel

make install_macos_intel

# For uninstalling

make uninstall

Demo for the ksctl installation

3 - Cloud Provider

This page includes more information about the different cloud providers.

3.1 - Amazon Web Services

AWS support for HA and Managed Clusters

Caution

We need credentials to access clusters.

These are confidential and should not be shared with anyone.

How these credentials are used by ksctl

- Environment Variables

export AWS_ACCESS_KEY_ID=""

export AWS_SECRET_ACCESS_KEY=""

- Using command line

ksctl cred
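Before running ksctl with the environment-variable method, it can help to confirm that both variables are actually set. The following is a minimal sketch, not part of ksctl: the helper name `missing_credentials` is hypothetical, and only the two variable names come from the section above.

```python
import os
from typing import Mapping, Optional

# Variable names taken from the environment-variable method described above.
REQUIRED_AWS_VARS = ("AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY")


def missing_credentials(env: Optional[Mapping[str, str]] = None) -> list:
    """Return the required variables that are unset or blank.

    Hypothetical helper for illustration; ksctl performs its own checks.
    """
    source = os.environ if env is None else env
    return [name for name in REQUIRED_AWS_VARS if not source.get(name, "").strip()]


if __name__ == "__main__":
    missing = missing_credentials()
    if missing:
        print("Missing credentials:", ", ".join(missing))
    else:
        print("All AWS credentials are set.")
```

The same pattern applies to the other providers by swapping in their variable names.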

Current Features

Cluster features

Highly Available cluster

Clusters that are managed by the user, not by the cloud provider.

You can choose between k3s and kubeadm as your bootstrap tool.

Custom components being used:

- Etcd database VM

- HAProxy loadbalancer VM for controlplane nodes

- controlplane VMs

- workerplane VMs

Managed Cluster Elastic Kubernetes Service

We provision two roles (ksctl-*) for each cluster:

- ksctl-<clustername>-wp-role for the EKS NodePool

- ksctl-<clustername>-cp-role for the EKS controlplane

We use sts:AssumeRole to assume the role and create the cluster.

Policies (aka permissions) for the user

Here are the policies and roles we are using:

- iam-role-full-access(Custom Policy)

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "VisualEditor6",
      "Effect": "Allow",
      "Action": [
        "iam:CreateInstanceProfile",
        "iam:DeleteInstanceProfile",
        "iam:GetRole",
        "iam:GetInstanceProfile",
        "iam:RemoveRoleFromInstanceProfile",
        "iam:CreateRole",
        "iam:DeleteRole",
        "iam:AttachRolePolicy",
        "iam:PutRolePolicy",
        "iam:ListInstanceProfiles",
        "iam:AddRoleToInstanceProfile",
        "iam:ListInstanceProfilesForRole",
        "iam:PassRole",
        "iam:CreateServiceLinkedRole",
        "iam:DetachRolePolicy",
        "iam:DeleteRolePolicy",
        "iam:DeleteServiceLinkedRole",
        "iam:GetRolePolicy",
        "iam:SetSecurityTokenServicePreferences"
      ],
      "Resource": [
        "arn:aws:iam::*:role/ksctl-*",
        "arn:aws:iam::*:instance-profile/*"
      ]
    }
  ]
}

- eks-full-access(Custom Policy)

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "VisualEditor0",
      "Effect": "Allow",
      "Action": [
        "eks:ListNodegroups",
        "eks:ListClusters",
        "eks:*"
      ],
      "Resource": "*"
    }
  ]
}

- AmazonEC2FullAccess(Aws)

- IAMReadOnlyAccess(Aws)

Validity of Kubeconfig

The kubeconfig is generated after you run:

ksctl switch aws --name here-you-go --region us-east-1

We use an STS token to authenticate with the cluster, so the kubeconfig is valid for 15 minutes.

Once you see an unauthorized error, you need to re-run the above command.
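The 15-minute window can be tracked on the client side so that the switch command is re-run before an unauthorized error appears. This is a rough sketch under that assumption; `needs_refresh` is a hypothetical helper and ksctl itself does not expose it.

```python
from datetime import datetime, timedelta, timezone

# 15 minutes, as stated above for the token-based kubeconfig.
KUBECONFIG_TTL = timedelta(minutes=15)


def needs_refresh(issued_at: datetime, now: datetime,
                  ttl: timedelta = KUBECONFIG_TTL) -> bool:
    """Return True once the kubeconfig is at least `ttl` old."""
    return now - issued_at >= ttl


if __name__ == "__main__":
    issued = datetime.now(timezone.utc) - timedelta(minutes=20)
    if needs_refresh(issued, datetime.now(timezone.utc)):
        print("Re-run: ksctl switch aws --name here-you-go --region us-east-1")
```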

3.2 - Azure

Azure support for HA and Managed Clusters

Caution

We need credentials to access clusters.

These are confidential and should not be shared with anyone.

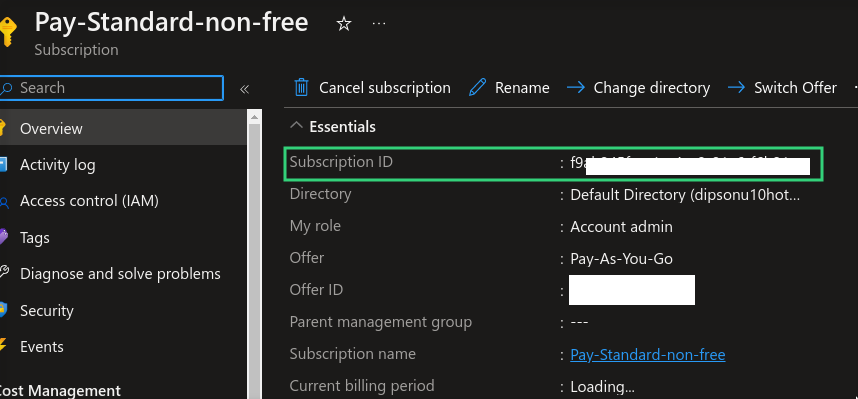

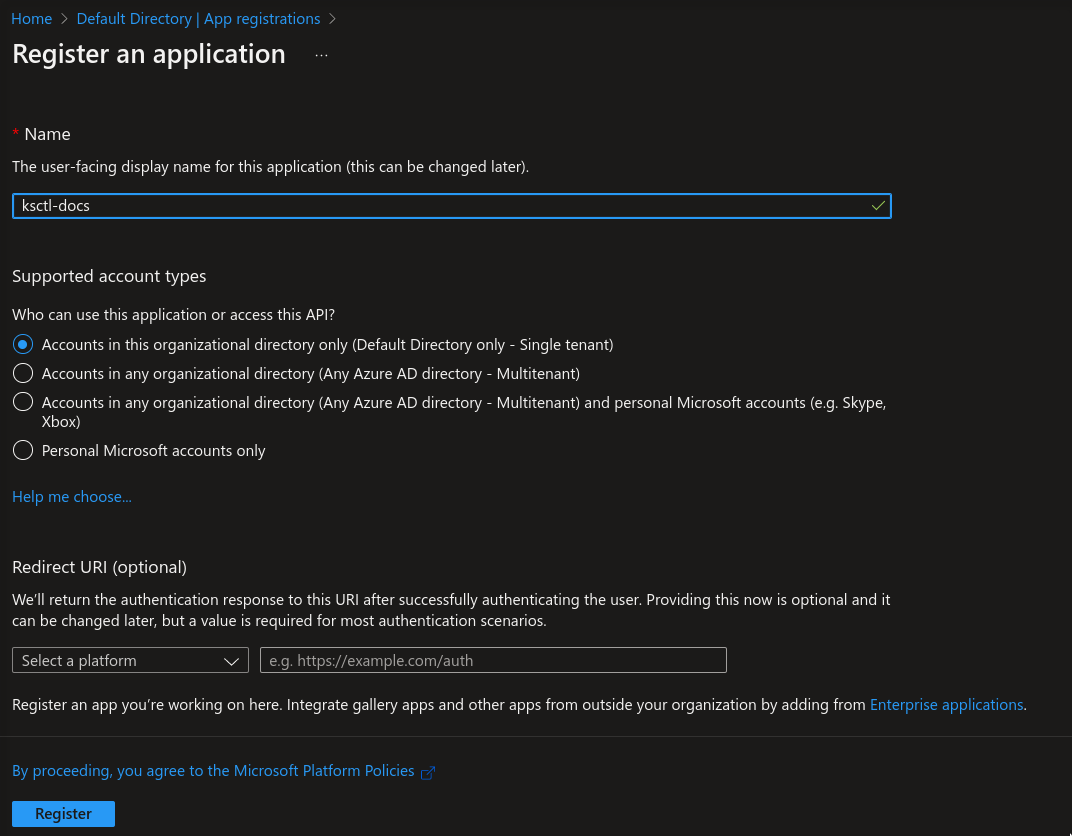

Azure Subscription ID

Get the subscription ID from your subscription.

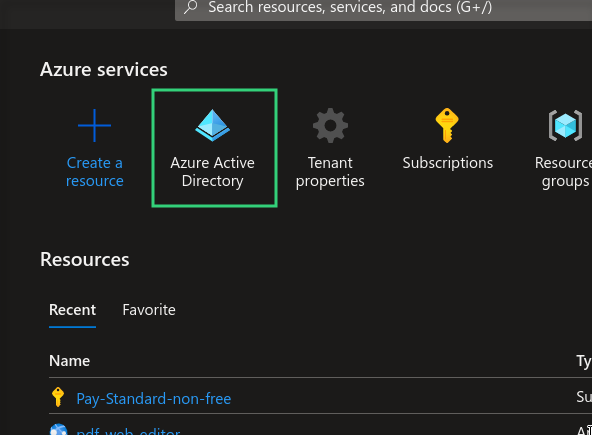

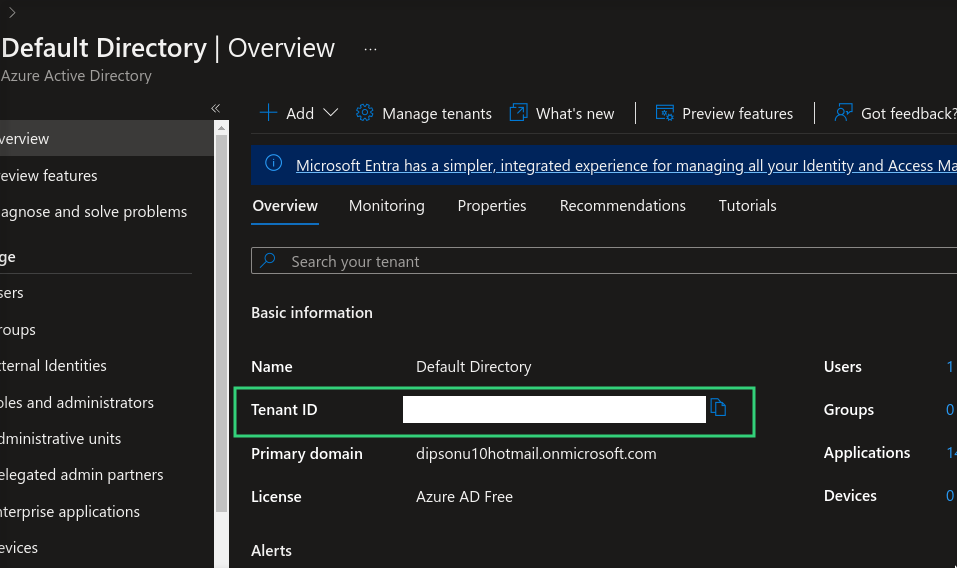

Azure Tenant ID

Azure Dashboard

Azure Dashboard contains all the credentials required

Let's get the tenant ID from Azure.

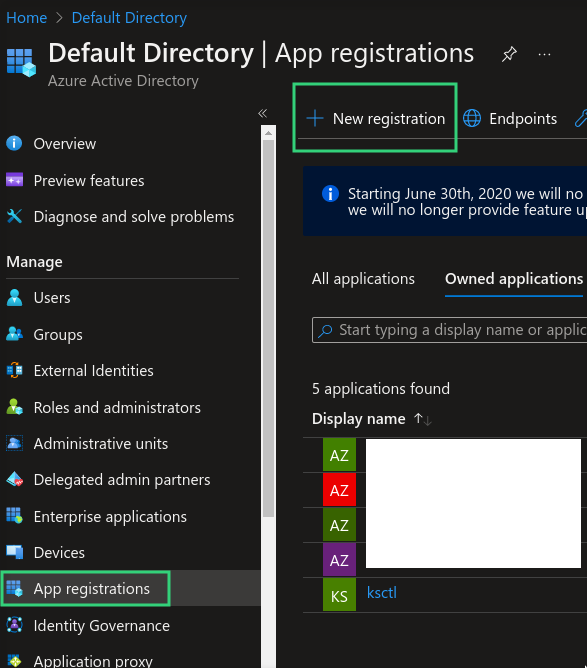

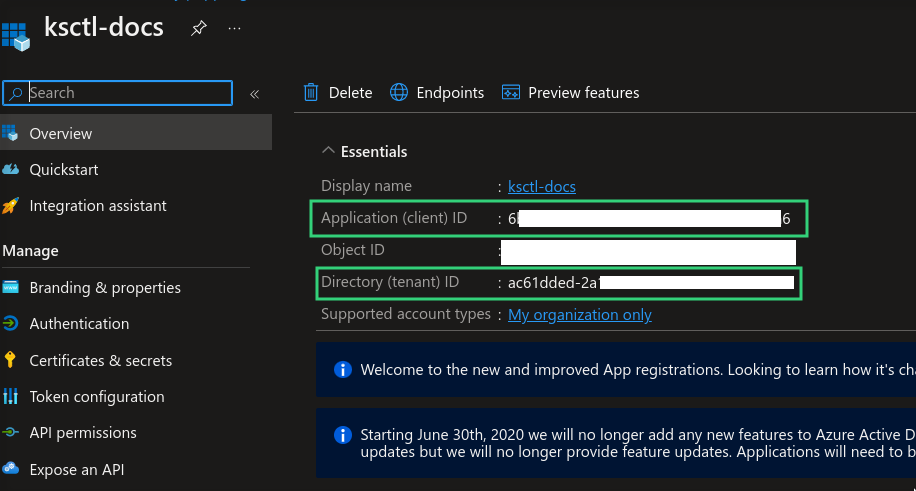

Azure Client ID

It represents the ID of the app you created.

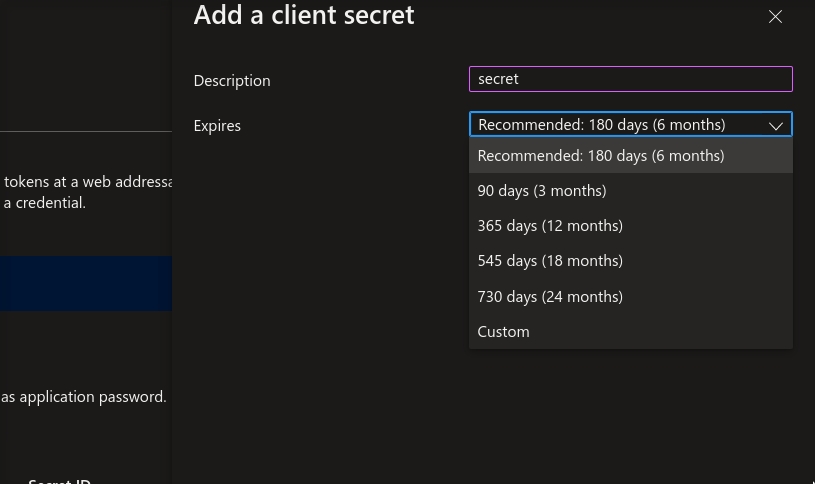

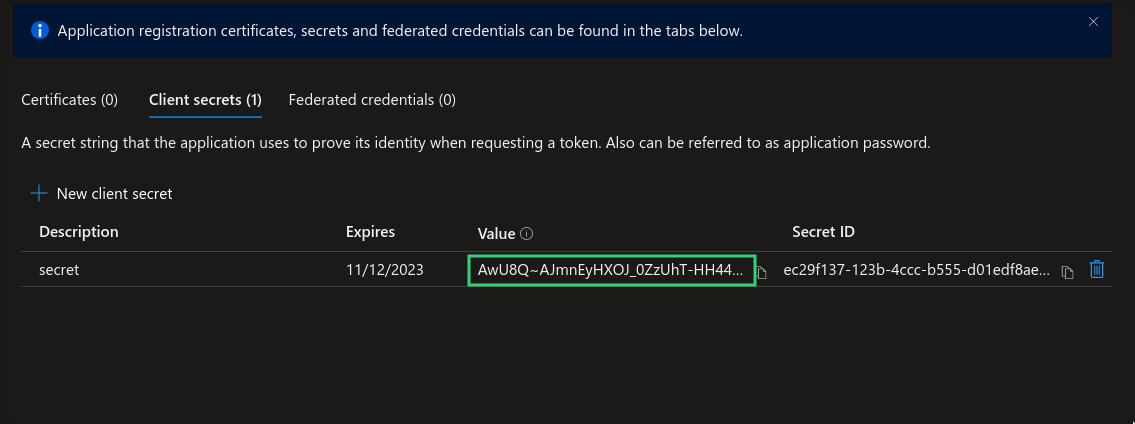

Azure Client Secret

It represents the secret associated with the app, which is required in order to use it.

Assign Role to your app

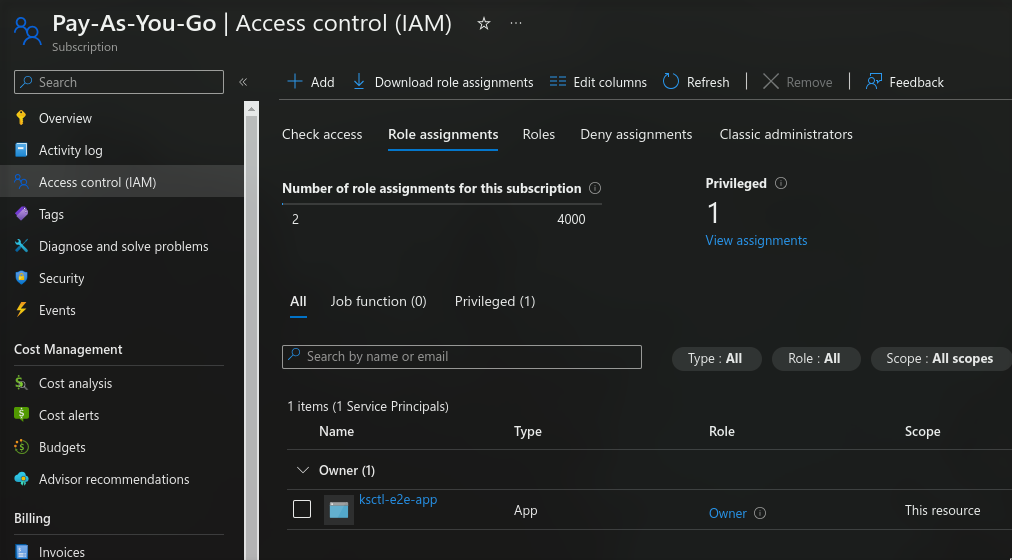

Head over to the Subscriptions page and click Access Control (IAM). Select Role Assignment, then click Add > Add Role Assignment. Create a new role and, when selecting the identity, specify the name of the app. Here you can customize the role this app has.

How these credentials are used by ksctl

- Environment Variables

export AZURE_TENANT_ID=""

export AZURE_SUBSCRIPTION_ID=""

export AZURE_CLIENT_ID=""

export AZURE_CLIENT_SECRET=""

- Using command line

ksctl cred

Current Features

Cluster features

Highly Available cluster

Clusters that are managed by the user, not by the cloud provider.

You can choose between k3s and kubeadm as your bootstrap tool.

Custom components being used:

- Etcd database VM

- HAProxy loadbalancer VM for controlplane nodes

- controlplane VMs

- workerplane VMs

Managed Cluster

clusters which are managed by the cloud provider

Other capabilities

Create, Update, Delete, Switch

Update the cluster infrastructure

Managed cluster: not supported yet

HA cluster

- addition and deletion of workerplane nodes

- SSH access to each cluster node (DB, LB, Controlplane, WorkerPlane): public access, secured by private key

Managed Cluster

Highly Available Cluster

3.3 - Civo

Civo support for HA and Managed Clusters

Caution

We need credentials to access clusters.

These are confidential and should not be shared with anyone.

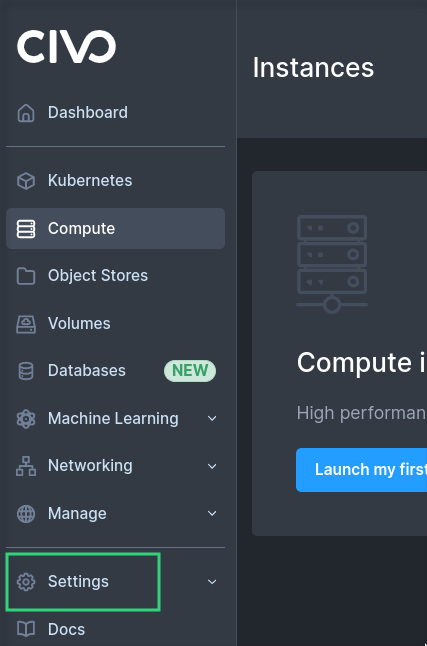

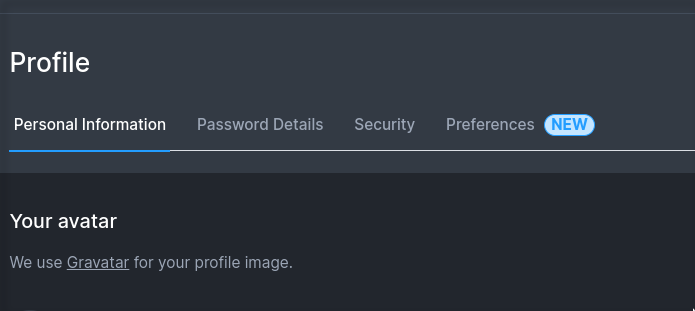

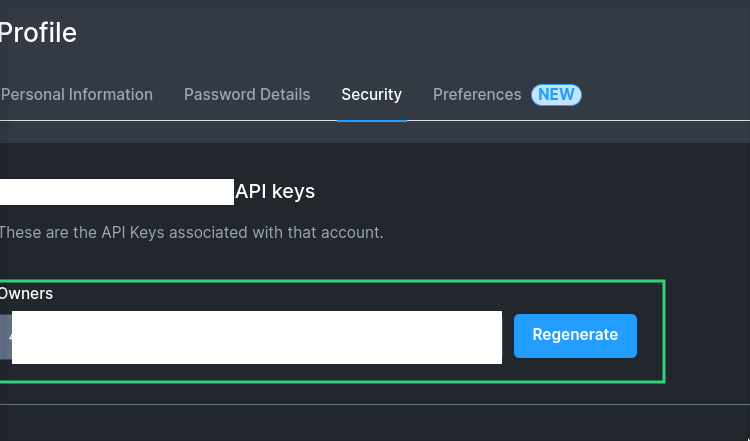

Getting credentials

Under Settings, look for the profile.

Copy the credentials.

How to add credentials to ksctl

- Environment Variables

export CIVO_TOKEN=""

- Using command line

ksctl cred

Current Features

Cluster features

Highly Available cluster

Clusters that are managed by the user, not by the cloud provider.

You can choose between k3s and kubeadm as your bootstrap tool.

Custom components being used:

- Etcd database VM

- HAProxy loadbalancer instance for controlplane nodes

- controlplane instances

- workerplane instances

Managed Cluster

clusters which are managed by the cloud provider

Other capabilities

Create, Update, Delete, Switch

Update the cluster infrastructure

Managed cluster: not supported yet

HA cluster

- addition and deletion of workerplane nodes

- SSH access to each cluster node (DB, LB, Controlplane): public access, secured by private key

- SSH access to each workerplane node: private access via local network, secured by private key

Managed Cluster

Highly Available Cluster

3.4 - Google Cloud Platform

GCP support for HA and Managed Clusters

Caution

We need credentials to access clusters.

These are confidential and should not be shared with anyone.

3.5 - Local

It creates a cluster on the host machine using kind.

Note

Prerequisites: Docker

Current features

Currently using Kind (Kubernetes in Docker).

About HA Cluster

Local systems are constrained to fewer CPUs and less memory, so there is no HA cluster.

4 - Reference

4.1 - Reference for command line reference

for the Ksctl cli

CLI Command Reference

Docs are now available in the cli repo. Here are the links to the documentation files:

Markdown format, RichText format

Info

These CLI commands are available with CLI-specific versions from v1.2.0 onwards: v1.2.0 cli references

5 - Contribution Guidelines

You can run almost all the tests locally, except the e2e tests, which require you to provide cloud credentials.

Provides generic tasks for new and existing contributors

Types of changes

There are many ways to contribute to the ksctl project. Here are a few examples:

- New changes to docs: You can contribute by writing new documentation, fixing typos, or improving the clarity of existing documentation.

- New features: You can contribute by proposing new features, implementing new features, or fixing bugs.

- Cloud support: You can contribute by adding support for new cloud providers.

- Kubernetes distribution support: You can contribute by adding support for new Kubernetes distributions.

Phases a change / feature goes through

- Raise an issue regarding it (used for prioritizing)

- Describe all the changes it demands

- If all goes well, you will be assigned

- If it's about adding cloud support, then use of CloudFactory is needed. Separate the logic for VMs, firewalls, etc. into their respective files, and add a helper file for the behind-the-scenes logic for ease of use

- If it's about adding distribution support, check its compatibility with the different cloud providers' VM configs and the firewall rules that need to be set up

Formatting for PR & Issue subject line

Subject / Title

# Related to enhancement

enhancement: <Title>

# Related to feature

feat: <Title>

# Related to Bug fix or other types of fixes

fix: <Title>

# Related to update

update: <Title>
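The subject-line convention above is easy to check mechanically, for example in a local pre-push script. The sketch below is illustrative only: the four prefixes come from the list above, while the function name and the exact regular expression are assumptions rather than anything the project ships.

```python
import re

# Prefixes listed in the subject-line convention above.
ALLOWED_PREFIXES = ("enhancement", "feat", "fix", "update")

# "<prefix>: <Title>" with a single space after the colon and a non-empty title.
_TITLE_RE = re.compile(r"^(%s): \S.*$" % "|".join(ALLOWED_PREFIXES))


def is_valid_title(title: str) -> bool:
    """Return True if the PR or issue title follows '<prefix>: <Title>'."""
    return _TITLE_RE.match(title) is not None


if __name__ == "__main__":
    for candidate in ("feat: add GCP support", "feature: add GCP support"):
        print(candidate, "->", is_valid_title(candidate))
```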

Body

Follow the PR or issue template and add all the significant changes to the PR description.

Commit messages

Give a detailed description in the git commits: what, why, and how.

Each commit must be signed off and should follow the conventional commit guidelines.

Conventional Commits

The commit message should be structured as follows:

<type>(optional scope): <description>

[optional body]

[optional footer(s)]
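To make the header structure concrete, here is a small parser for the first line of a commit message. It is a simplified sketch: it handles only `<type>(optional scope): <description>` as shown above and ignores other parts of the Conventional Commits specification, such as the `!` breaking-change marker. The names `CommitHeader` and `parse_header` are hypothetical.

```python
import re
from typing import NamedTuple, Optional


class CommitHeader(NamedTuple):
    type: str
    scope: Optional[str]
    description: str


# <type>(optional scope): <description>
_HEADER_RE = re.compile(
    r"^(?P<type>[a-z]+)(?:\((?P<scope>[^()]+)\))?: (?P<description>\S.*)$"
)


def parse_header(line: str) -> Optional[CommitHeader]:
    """Parse the first line of a commit message, or return None if it does not match."""
    match = _HEADER_RE.match(line)
    if match is None:
        return None
    return CommitHeader(match["type"], match["scope"], match["description"])


if __name__ == "__main__":
    print(parse_header("fix(aws): handle expired token"))
    print(parse_header("feat: add civo support"))
```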

For more detailed information on conventional commits, you can refer to the official Conventional Commits specification.

Sign-off

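Each commit must be signed off. Git has two related flags: -s (--signoff) appends a Signed-off-by line to the commit message, and -S (--gpg-sign) creates a cryptographically signed commit. When committing changes in your local branch, add both flags to the git commit command:

$ git commit -s -S -m "YOUR_COMMIT_MESSAGE"

# Creates a signed-off (-s) and cryptographically signed (-S) commit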

You can find more comprehensive details on how to sign off git commits by referring to the GitHub section on signing commits.

Verification of Commit Signatures

You have the option to sign commits and tags locally, which adds a layer of assurance regarding the origin of your changes. GitHub designates commits or tags as either “Verified” or “Partially verified” if they possess a GPG, SSH, or S/MIME signature that is cryptographically valid.

GPG Commit Signature Verification

To sign commits using GPG and ensure their verification on GitHub, adhere to these steps:

- Check for existing GPG keys.

- Generate a new GPG key.

- Add the GPG key to your GitHub account.

- Inform Git about your signing key.

- Proceed to sign commits.

SSH Commit Signature Verification

To sign commits using SSH and ensure their verification on GitHub, follow these steps:

- Check for existing SSH keys.

- Generate a new SSH key.

- Add an SSH signing key to your GitHub account.

- Inform Git about your signing key.

- Proceed to sign commits.

S/MIME Commit Signature Verification

To sign commits using S/MIME and ensure their verification on GitHub, follow these steps:

- Inform Git about your signing key.

- Proceed to sign commits.

For more detailed instructions, refer to GitHub’s documentation on commit signature verification

Development

First, fork the ksctl repository.

cd <path> # the directory where you want to clone ksctl

mkdir <directory name> # create a directory

cd <directory name> # go inside the directory

git clone https://github.com/${YOUR_GITHUB_USERNAME}/ksctl.git # clone your forked repository

cd ksctl # go inside the ksctl directory

git remote add upstream https://github.com/ksctl/ksctl.git # set upstream

git remote set-url --push upstream no_push # no push to upstream

Trying out code changes

Before submitting a code change, it is important to test your changes thoroughly. You can do this by running the unit tests and integration tests.

Submitting changes

Once you have tested your changes, you can submit them to the ksctl project by creating a pull request. Make sure you use the provided PR template.

Getting help

If you need help contributing to the ksctl project, you can ask on the kubesimplify Discord server (ksctl-cli channel), or raise an issue or discussion.

Thank you for contributing!

We appreciate your contributions to the ksctl project!

Some of our contributors ksctl contributors

5.1 - Contribution Guidelines for CLI

Repository: ksctl/cli

How to Build from source

Linux

make install_linux # for linux

Mac OS

make install_macos # for macos

Windows

.\builder.ps1 # for windows

5.2 - Contribution Guidelines for Core

Repository: ksctl/ksctl

Run all mock tests, unit tests, and lints

make test

Run all unit tests

make unit_test_all

Run all mock tests

make mock_all

For E2E tests on local

Set the required tokens as ENV vars, then:

cd test/e2e

# then the syntax for running

go run . -op create -file azure/create.json

# for the available operations, refer to the file test/e2e/consts.go

5.3 - Contribution Guidelines for Docs

Repository: ksctl/docs

How to Build from source

# Prerequisites

npm install -D postcss

npm install -D postcss-cli

npm install -D autoprefixer

npm install hugo-extended

Install Dependencies

hugo serve

6 - Concepts

This section will help you to learn about the underlying system of Ksctl. It will help you to obtain a deeper understanding of how Ksctl works.

Sequence diagrams for 2 major operations

Create Cloud-Managed Clusters

sequenceDiagram

participant cm as Manager Cluster Managed

participant cc as Cloud Controller

participant kc as Ksctl Kubernetes Controller

cm->>cc: transfers specs from user or machine

cc->>cc: to create the cloud infra (network, subnet, firewall, cluster)

cc->>cm: 'kubeconfig' and other cluster access to the state

cm->>kc: shares 'kubeconfig'

kc->>kc: installs ksctl agent, stateimporter and controllers

kc->>cm: status of creation

Create Self-Managed HA clusters

sequenceDiagram

participant csm as Manager Cluster Self-Managed

participant cc as Cloud Controller

participant bc as Bootstrap Controller

participant kc as Ksctl Kubernetes Controller

csm->>cc: transfers specs from user or machine

cc->>cc: to create the cloud infra (network, subnet, firewall, vms)

cc->>csm: return state to be used by BootstrapController

csm->>bc: transfers infra state like ssh key, pub IPs, etc

bc->>bc: bootstrap the infra by either (k3s or kubeadm)

bc->>csm: 'kubeconfig' and other cluster access to the state

csm->>kc: shares 'kubeconfig'

kc->>kc: installs ksctl agent, stateimporter and controllers

kc->>csm: status of creation

6.1 - Cloud Controller

It is responsible for controlling the sequence of tasks for every cloud provider to be executed

6.2 - Core Manager

It is responsible for managing client requests and calling the corresponding controller

Types

ManagerClusterKsctl

Role: Perform ksctl getCluster, switchCluster

ManagerClusterKubernetes

Role: Perform ksctl addApplicationAndCrds

Currently intended for machine-to-machine use, not for the ksctl CLI

ManagerClusterManaged

Role: Perform ksctl createCluster, deleteCluster

ManagerClusterSelfManaged

Role: Perform ksctl createCluster, deleteCluster, addWorkerNodes, delWorkerNodes
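The roles above amount to a lookup from operation to manager type. The sketch below restates that mapping as data; it is an illustration of the dispatch idea only, and the dictionary and the function `managers_for` are hypothetical, not ksctl code.

```python
# Manager types and their operations, copied from the roles listed above.
MANAGER_OPERATIONS = {
    "ManagerClusterKsctl": ("getCluster", "switchCluster"),
    "ManagerClusterKubernetes": ("addApplicationAndCrds",),
    "ManagerClusterManaged": ("createCluster", "deleteCluster"),
    "ManagerClusterSelfManaged": (
        "createCluster",
        "deleteCluster",
        "addWorkerNodes",
        "delWorkerNodes",
    ),
}


def managers_for(operation: str) -> list:
    """Return the manager types that can perform the given operation."""
    return [name for name, ops in MANAGER_OPERATIONS.items() if operation in ops]


if __name__ == "__main__":
    for op in ("createCluster", "addWorkerNodes", "switchCluster"):
        print(op, "->", managers_for(op))
```

Note that createCluster and deleteCluster map to two managers; which one applies depends on whether the cluster is cloud-managed or self-managed.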

6.3 - Distribution Controller

It is responsible for controlling the execution sequence for configuring cloud resources with respect to the chosen Kubernetes distribution

7 - Ksctl Components

Components

- ksctl agent

- ksctl stateimporter

- ksctl application controller

Sequence diagram of how it's deployed

flowchart TD

Base(Ksctl Infra and Bootstrap) -->|Cluster is created| KC(Ksctl controller)

KC -->|Creates| Storage{storageProvider=='local'}

Storage -->|Yes| KSI(Ksctl Storage Importer)

Storage -->|No| KA(Ksctl Agent)

KSI -->KA

KA -->|Health| D(Deploy other ksctl controllers)

7.1 - Ksctl Agent

It is ksctl's solution for infrastructure management and Kubernetes management, especially inside the Kubernetes cluster.

It is a gRPC server running as a deployment, and a fleet of controllers calls it to perform certain operations, for instance application installation via stack.application.ksctl.com/v1alpha.

It is installed on all Kubernetes clusters created via ksctl >= v1.2.0.

7.2 - Ksctl Application Controller

It helps in deploying applications using a CRD. It manages installation, upgrades, downgrades, and uninstallation from one version to another, and provides a single source of truth for which applications are installed.

Types

Stack

To define heterogeneous components we came up with a stack, which contains M components: different applications with their versions.

Info

This is currently available on all clusters created by [email protected]

Note

It has a dependency on ksctl agent

About Lifecycle of application stack

Once you have run kubectl apply on the stack, it starts deploying the applications in the stack. If you want to upgrade the applications in the stack, edit the stack, change the version of the application, and apply the stack again; it will uninstall the previous version and install the new version. In effect it reinstalls the stack, which might cause downtime.

Supported Apps and CNI

| Name | Type | Category | Ksctl_Name | More Info |

|---|---|---|---|---|

| Argo-CD | standard | CI/CD | standard-argocd | Link |

| Argo-Rollouts | standard | CI/CD | standard-argorollouts | Link |

| Istio | standard | Service Mesh | standard-istio | Link |

| Cilium | standard | - | cilium | Link |

| Flannel | standard | - | flannel | Link |

| Kube-Prometheus | standard | Monitoring | standard-kubeprometheus | Link |

| SpinKube | production | Wasm | production-spinkube | Link |

| WasmEdge and Wasmtime | production | Wasm | production-kwasm | Link |

Note on wasm category apps

Only one app under the wasm category can be installed at a time; you might need to uninstall one to get another running.

Also, the current implementation of the wasm category apps annotates all the nodes with kwasm as true.
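The one-wasm-app-at-a-time rule can be checked before applying a set of stacks. The following sketch assumes the two Ksctl_Name values from the Wasm rows of the table above; the function `wasm_conflicts` is hypothetical and is not something the application controller exposes.

```python
# Ksctl_Name values of the apps in the Wasm category (from the table above).
WASM_STACKS = {"production-spinkube", "production-kwasm"}


def wasm_conflicts(stack_ids) -> list:
    """Return the distinct wasm stack IDs if more than one is requested, else []."""
    selected = list(dict.fromkeys(s for s in stack_ids if s in WASM_STACKS))
    return selected if len(selected) > 1 else []


if __name__ == "__main__":
    requested = ["standard-argocd", "production-spinkube", "production-kwasm"]
    conflicts = wasm_conflicts(requested)
    if conflicts:
        print("Only one wasm app can be installed at a time:", ", ".join(conflicts))
```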

Components in Stack

All stacks are collections of components, so when you override stack values you need to say which component the value belongs to and then specify the value in a map[string]any format.

Argo-CD

How to use it (Basic Usage)

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: argocd
spec:
  stacks:
  - stackId: standard-argocd
    appType: app

Overrides available

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: argocd
spec:
  stacks:
  - stackId: standard-argocd
    appType: app
    overrides:
      argocd:
        version: <string> # version of the argocd
        noUI: <bool> # to disable the UI
        namespace: <string> # namespace to install argocd
        namespaceInstall: <bool> # to install namespace specific argocd

Argo-Rollouts

How to use it (Basic Usage)

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: argorollouts
spec:
  stacks:
  - stackId: standard-argorollouts
    appType: app

Overrides available

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: argorollouts
spec:
  stacks:
  - stackId: standard-argorollouts
    appType: app
    overrides:
      argorollouts:
        version: <string> # version of the argorollouts
        namespace: <string> # namespace to install argorollouts
        namespaceInstall: <bool> # to install namespace specific argorollouts

Istio

How to use it (Basic Usage)

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: istio
spec:
  stacks:
  - stackId: standard-istio
    appType: app

Overrides available

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: istio
spec:
  stacks:
  - stackId: standard-istio
    appType: app
    overrides:
      istio:
        version: <string> # version of the istio
        helmBaseChartOverridings: <map[string]any> # helm chart overridings, istio/base
        helmIstiodChartOverridings: <map[string]any> # helm chart overridings, istio/istiod

Cilium

Currently, Cilium cannot be installed via the ksctl CRD, because a CNI needs to be installed while the cluster is being configured; otherwise it causes network issues.

Cilium can still be installed during cluster creation, where the only configuration available is the version. We are working on how to let users specify the overrides at cluster creation.

We are considering a file spec instead of a command-line parameter; until that is done, you have to wait.

For reference, here is how it is defined:

How to use it (Basic Usage)

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: cilium
spec:
  stacks:
  - stackId: cilium
    appType: app

Overrides available

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: cilium
spec:
  stacks:
  - stackId: cilium
    appType: app
    overrides:
      cilium:
        version: <string> # version of the cilium
        ciliumChartOverridings: <map[string]any> # helm chart overridings, cilium

Flannel

Currently, Flannel cannot be installed via the ksctl CRD, because a CNI needs to be installed while the cluster is being configured; otherwise it causes network issues.

Flannel can still be installed during cluster creation, where the only configuration available is the version. We are working on how to let users specify the overrides at cluster creation.

We are considering a file spec instead of a command-line parameter; until that is done, you have to wait.

How to use it (Basic Usage)

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: flannel
spec:
  stacks:
  - stackId: flannel
    appType: cni

Overrides available

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: flannel
spec:
  stacks:
  - stackId: flannel
    appType: cni
    overrides:
      flannel:
        version: <string> # version of the flannel

Kube-Prometheus

How to use it (Basic Usage)

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: monitoring
spec:
  stacks:
  - stackId: standard-kubeprometheus
    appType: app

Overrides available

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: monitoring
spec:
  stacks:
  - stackId: standard-kubeprometheus
    appType: app
    overrides:
      kube-prometheus:
        version: <string> # version of the kube-prometheus
        helmKubePromChartOverridings: <map[string]any> # helm chart overridings, kube-prometheus

SpinKube

How to use it (Basic Usage)

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: wasm-spinkube
spec:
  stacks:
  - stackId: production-spinkube
    appType: app

Demo app

kubectl apply -f https://raw.githubusercontent.com/spinkube/spin-operator/main/config/samples/simple.yaml

kubectl port-forward svc/simple-spinapp 8083:80

curl localhost:8083/hello

Overrides available

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: wasm-spinkube
spec:
  stacks:
  - stackId: production-spinkube
    appType: app
    overrides:
      spinkube-operator:
        version: <string> # the version is the same for shim-executor, runtime-class, shim-executor-crd and spinkube-operator
        helmOperatorChartOverridings: <map[string]any> # helm chart overridings, spinkube-operator
      spinkube-operator-shim-executor:
        version: <string> # the version is the same for shim-executor, runtime-class, shim-executor-crd and spinkube-operator
      spinkube-operator-runtime-class:
        version: <string> # the version is the same for shim-executor, runtime-class, shim-executor-crd and spinkube-operator
      spinkube-operator-crd:
        version: <string> # the version is the same for shim-executor, runtime-class, shim-executor-crd and spinkube-operator
      cert-manager:
        version: <string>
        certmanagerChartOverridings: <map[string]any> # helm chart overridings, cert-manager
      kwasm-operator:
        version: <string>
        kwasmOperatorChartOverridings: <map[string]any> # helm chart overridings, kwasm/kwasm-operator

Kwasm

How to use it (Basic Usage)

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: wasm-kwasm
spec:
  stacks:
  - stackId: production-kwasm
    appType: app

Demo app(wasmedge)

---
apiVersion: v1
kind: Pod
metadata:
  name: "myapp"
  namespace: default
  labels:
    app: nice
spec:
  runtimeClassName: wasmedge
  containers:
  - name: myapp
    image: "docker.io/cr7258/wasm-demo-app:v1"
    ports:
    - containerPort: 8080
      name: http
  restartPolicy: Always
---
apiVersion: v1
kind: Service
metadata:
  name: nice
spec:
  selector:
    app: nice
  type: ClusterIP
  ports:
  - name: nice
    protocol: TCP
    port: 8080
    targetPort: 8080

Demo app(wasmtime)

apiVersion: batch/v1
kind: Job
metadata:
  name: nice
  namespace: default
  labels:
    app: nice
spec:
  template:
    metadata:
      name: nice
      labels:
        app: nice
    spec:
      runtimeClassName: wasmtime
      containers:
      - name: nice
        image: "meteatamel/hello-wasm:0.1"
      restartPolicy: OnFailure

#### For wasmedge

# once up and running

kubectl port-forward svc/nice 8080:8080

# then you can curl the service

curl localhost:8080

#### For wasmtime

# just check the logs

Overrides available

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: wasm-wasmedge
spec:
  stacks:
  - stackId: production-kwasm
    appType: app
    overrides:
      kwasm-operator:
        version: <string>
        kwasmOperatorChartOverridings: <map[string]any> # helm chart overridings, kwasm/kwasm-operator

Example usage

Let's deploy Argo-CD v2.9.12 and Kube-Prometheus 55.0.0

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: monitoring-plus-gitops
spec:
  components:
  - appName: standard-argocd
    appType: app
    version: v2.9.12
  - appName: standard-kubeprometheus
    appType: app
    version: "55.0.0"

You can see that once it's deployed, it fetches and deploys them.

Let's try to upgrade them to their latest versions:

kubectl edit stack monitoring-plus-gitops

apiVersion: application.ksctl.com/v1alpha1
kind: Stack
metadata:
  name: monitoring-plus-gitops
spec:
  components:
  - appName: standard-argocd
    appType: app
    version: latest
  - appName: standard-kubeprometheus
    appType: app
    version: latest

Once edited, it uninstalls the previous installation and reinstalls the latest deployments.

7.3 - Ksctl State-Importer

It is a helper deployment to transfer state information from one storage option to another.

It is used to transfer data in ~/.ksctl location (provided the cluster is created via storageProvider: store-local).

It utilizes these 2 methods:

- Export: StorageFactory Interface

- Import: StorageFactory Interface

So before the ksctl agent is deployed, we first create this pod, which in turn runs an HTTP server with storageProvider: store-kubernetes and uses the storage.Import() method.

Once we get 200 OK responses from the HTTP server, we remove the pod and move on to the ksctl agent deployment, so that it can use the state file present in configmaps and secrets.
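The "wait for 200 OK, then move on" step can be pictured as a bounded polling loop. The sketch below is not the ksctl implementation: the probe is an injected callable standing in for the HTTP request to the state-importer pod, and the function name, attempt count, and delay are all assumptions.

```python
import time
from typing import Callable


def wait_until_imported(probe: Callable[[], int], attempts: int = 10,
                        delay_s: float = 1.0) -> bool:
    """Call probe() until it returns HTTP 200 or the attempts run out."""
    for attempt in range(attempts):
        if probe() == 200:
            return True
        if attempt < attempts - 1:
            time.sleep(delay_s)  # back off before the next check
    return False


if __name__ == "__main__":
    # Stand-in for a real HTTP call: two failures, then success.
    statuses = iter([503, 503, 200])
    ok = wait_until_imported(lambda: next(statuses), attempts=5, delay_s=0.0)
    print("remove pod, deploy ksctl agent" if ok else "import did not complete")
```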

Warning

If the storageType is external (mongodb), none of this is needed. Instead, we create a Kubernetes secret in which the external storage solution's environment variable is set, and we also need to customize the ksctl agent deployment.

8 - Kubernetes Distributions

K3s and Kubeadm only work for HA self-managed clusters

8.1 - K3s

K3s for HA Cluster on supported provider

K3s is used for self-managed clusters. It's a lightweight k8s distribution. We are using it as follows:

- controlplane (k3s server)

- workerplane (k3s agent)

- datastore (etcd members)

Info

Here the default CNI is flannel

8.2 - Kubeadm

Kubeadm for HA Cluster on supported provider

Kubeadm support is added with etcd as datastore

Info

Here the default CNI is flannel

9 - Storage

storage providers

9.1 - External Storage

External MongoDB as a Storage provider

Refer: internal/storage/external/mongodb

Data to store and filtering it performs

- First it gets the cluster data / credentials data based on these filters:

  - cluster_name (for cluster)
  - region (for cluster)
  - cloud_provider (for cluster & credentials)
  - cluster_type (for cluster)

- Also, when the state of the cluster has reached the stable desired state, mark the IsCompleted flag in the specific cloud_provider struct to indicate it's done

- Make sure the above things are specified before writing to the storage

How to use it

- You need to call the Init function to get the storage; make sure you have the interface type variable as the caller

- Before performing any operations you must call Connect()

- For using the methods Read(), Write(), Delete(), make sure you have called Setup()

- For calling ReadCredentials(), WriteCredentials() you can use them directly; you just need to specify the cloud provider you want to write

- For calling GetOneOrMoreClusters() you simply need to specify the filter

- For calling AlreadyCreated() you just have to specify the func args

- Don’t forget to call storage.Kill() when you want to stop the complete execution. It guarantees that it will wait until all pending operations on the storage are completed

- Custom storage directory: you need to specify the env var KSCTL_CUSTOM_DIR_ENABLED; the value must be directory names, space separated

- Specify the required ENV vars

export MONGODB_URI=""

Hint: mongodb://${username}:${password}@${domain}:${port} or, for MongoDB Atlas, mongodb+srv://${username}:${password}@${domain}
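Usernames and passwords that contain characters such as `@` or `/` must be percent-encoded before they go into the URI. The sketch below builds both URI shapes from the hint above; the helper name `mongodb_uri` and its parameters are hypothetical, and the resulting string would then be exported as MONGODB_URI.

```python
from typing import Optional
from urllib.parse import quote_plus


def mongodb_uri(username: str, password: str, domain: str,
                port: Optional[int] = None, srv: bool = False) -> str:
    """Build a MongoDB connection string with percent-encoded credentials."""
    user = quote_plus(username)
    pwd = quote_plus(password)
    if srv:
        # Atlas-style URI: no port is given.
        return f"mongodb+srv://{user}:{pwd}@{domain}"
    if port is None:
        raise ValueError("port is required for a mongodb:// URI")
    return f"mongodb://{user}:{pwd}@{domain}:{port}"


if __name__ == "__main__":
    print(mongodb_uri("admin", "p@ss/word", "localhost", 27017))
```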

Things to look for

- Make sure that when you receive return data from Read(), you copy the value stored at the returned address into the storage pointer variable, not the address itself!

- When any credentials are written, they are stored in:

  - Database: ksctl-{userid}-db
  - Collection: {cloud_provider}
  - Document/Record: raw bson data with the above specified data and filter fields

- When any clusterState is written, it gets stored in:

  - Database: ksctl-{userid}-db
  - Collection: credentials
  - Document/Record: raw bson data with the above specified data and filter fields

- When you do Switch (aka getKubeconfig), it fetches the kubeconfig from point 3 (the cluster state) and stores it in

<some_dir>/.ksctl/kubeconfig

9.2 - Local Storage

Local as a Storage Provider

Refer: internal/storage/local

Data to store and filtering it performs

- First it gets the cluster data / credentials data based on these filters:

  - cluster_name (for cluster)
  - region (for cluster)
  - cloud_provider (for cluster & credentials)
  - cluster_type (for cluster)

- Also, when the state of the cluster has reached the stable desired state, mark the IsCompleted flag in the specific cloud_provider struct to indicate it's done

- Make sure the above things are specified before writing to the storage

It is stored something like this.

It will use almost the same construct.

* ClusterInfos => $USER_HOME/.ksctl/state/
  |-- {cloud_provider}
      |-- {cluster_type} aka (ha, managed)
          |-- "{cluster_name} {region}"
              |-- state.json
* CredentialInfo => $USER_HOME/.ksctl/credentials/{cloud_provider}.json
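The layout above can be expressed as two small path builders. This is a sketch of the directory convention only; the function names are hypothetical, and `PurePosixPath` is used so the example produces the same output on any platform.

```python
from pathlib import PurePosixPath


def state_path(home: str, cloud_provider: str, cluster_type: str,
               cluster_name: str, region: str) -> PurePosixPath:
    """Path of state.json; note the space in the '{cluster_name} {region}' directory."""
    return (PurePosixPath(home) / ".ksctl" / "state" / cloud_provider
            / cluster_type / f"{cluster_name} {region}" / "state.json")


def credentials_path(home: str, cloud_provider: str) -> PurePosixPath:
    """Path of the credentials file for one cloud provider."""
    return PurePosixPath(home) / ".ksctl" / "credentials" / f"{cloud_provider}.json"


if __name__ == "__main__":
    print(state_path("/home/dev", "aws", "ha", "demo", "us-east-1"))
    print(credentials_path("/home/dev", "civo"))
```

Because the cluster directory name contains a space, quote the path when using it in a shell.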

How to use it

- You need to call the Init function to get the storage; make sure you have the interface type variable as the caller

- Before performing any operations you must call Connect()

- For using the methods Read(), Write(), Delete(), make sure you have called Setup()

- For calling ReadCredentials(), WriteCredentials() you can use them directly; you just need to specify the cloud provider you want to write

- For calling GetOneOrMoreClusters() you simply need to specify the filter

- For calling AlreadyCreated() you just have to specify the func args

- Don’t forget to call storage.Kill() when you want to stop the complete execution. It guarantees that it will wait until all pending operations on the storage are completed

- Custom storage directory: you need to specify the env var KSCTL_CUSTOM_DIR_ENABLED; the value must be directory names, space separated

- It creates the configuration directories on your behalf

Things to look for

- Make sure that when you receive return data from Read(), you copy the value stored at the returned address into the storage pointer variable, not the address itself!

- When any credentials are written, they are stored in

<some_dir>/.ksctl/credentials/{cloud_provider}.json

- When any clusterState is written, it gets stored in

<some_dir>/.ksctl/state/{cloud_provider}/{cluster_type}/{cluster_name} {region}/state.json

- When you do Switch (aka getKubeconfig), it fetches the kubeconfig from point 3 (the cluster state) and stores it in

<some_dir>/.ksctl/kubeconfig